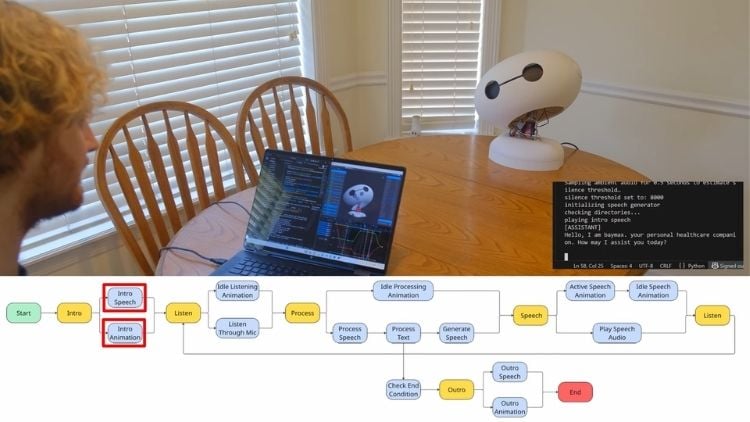

Maker Brae Barnes is developing a conversational Baymax-style robot that combines animatronic motion with an AI-driven speech system. The current prototype focuses on the head assembly, featuring articulated neck movement, blinking eyelids, and the ability to respond to spoken input using a speech-processing pipeline. The goal is to create a robot that not only talks but also conveys expression through physical motion, similar to professional animatronics.

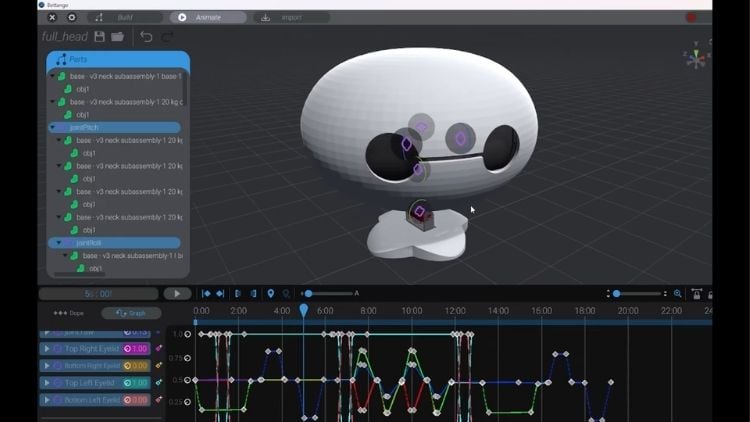

From a technical perspective, the mechanical implementation is where most of the engineering effort is evident. The three-axis neck mechanism provides full rotational freedom, but the more notable feature is the eyelid system. A 3D-printed linkage driven by separate actuators allows independent eyelid motion, which significantly improves perceived expressiveness.

The control system follows a structured animatronics approach, where predefined motion sequences are triggered based on conversational context. Motion interpolation between setpoints helps avoid abrupt servo transitions, resulting in smoother and more natural movement. On the AI side, the system uses a standard pipeline of speech-to-text, processing, and text-to-speech, but there is still a noticeable response delay during interaction. Interim animations help mask this latency, yet it remains perceptible and highlights the current limitations of real-time inference in such systems. Overall, the project reflects a practical integration of mechanical design, control systems, and AI, with further challenges expected as it scales beyond the current head-only implementation.
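To make the idea of motion interpolation between setpoints concrete, here is a minimal Python sketch. It is not taken from the project: the function names (`ease_in_out`, `interpolate_setpoints`), the smoothstep easing curve, and the angle values are all assumptions chosen for illustration. The actual build may use a different easing profile or run entirely on a microcontroller.

```python
# Illustrative sketch only: names and the easing curve are assumptions,
# not the project's actual control code.

def ease_in_out(t: float) -> float:
    """Smoothstep easing: 0 at t=0, 1 at t=1, with zero slope at both ends."""
    t = max(0.0, min(1.0, t))  # clamp so out-of-range input cannot overshoot
    return t * t * (3.0 - 2.0 * t)


def interpolate_setpoints(start_deg: float, end_deg: float, steps: int) -> list[float]:
    """Return steps + 1 servo angles from start_deg to end_deg, inclusive.

    Angles near the endpoints change slowly, so the servo accelerates and
    decelerates gradually instead of jumping straight to the new setpoint.
    """
    if steps < 1:
        raise ValueError("steps must be at least 1")
    span = end_deg - start_deg
    return [start_deg + span * ease_in_out(i / steps) for i in range(steps + 1)]


if __name__ == "__main__":
    # Hypothetical neck pan move from 0 to 90 degrees in four steps.
    for angle in interpolate_setpoints(0.0, 90.0, 4):
        print(f"{angle:.2f}")
```

In a real controller, each angle in the returned list would be sent to the servo at a fixed time interval. The eased spacing is what makes the motion start and stop gently: the first and last steps cover less distance than the middle ones.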