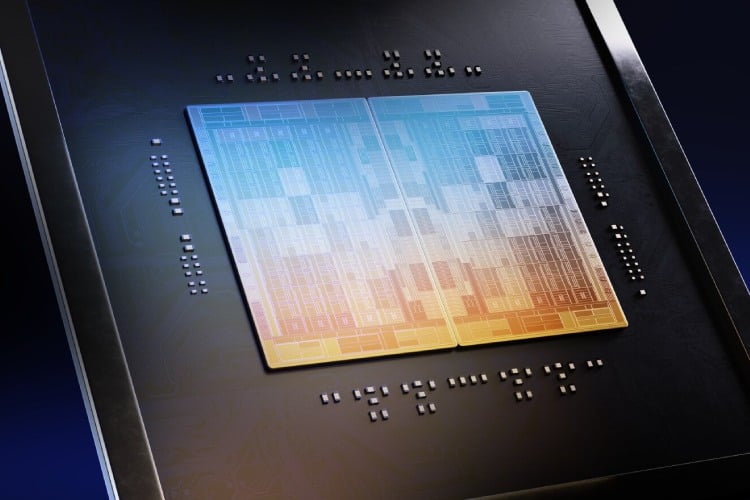

Arm has introduced the AGI CPU, its first internally developed data center processor, marking a transition from its long-standing IP licensing model to delivering complete silicon solutions for cloud infrastructure. The launch is closely aligned with the growing demands of artificial intelligence workloads, particularly those requiring sustained, multi-step computation rather than traditional burst-style processing. By entering the data center CPU market directly, Arm is positioning itself alongside established server processor vendors with a design tailored specifically for modern AI systems.

The AGI CPU is based on Arm’s Neoverse V3 architecture and is engineered for what the company terms “agentic AI” workloads: applications that require continuous orchestration, reasoning, and parallel task execution. To support this, the processor integrates up to 136 cores per CPU, with each core running a single dedicated program thread. Arm reports memory bandwidth of up to 6 GB/s per core with sub-100 ns latency, a combination intended to sustain high-throughput workloads without the bottlenecks typically seen in shared-resource designs.

At the system level, the processor operates within a 300 W thermal design power (TDP) envelope while maintaining deterministic performance under sustained load. Because each thread has its own core, threads do not sit idle waiting for shared execution resources, which is particularly relevant for large-scale AI pipelines where predictable execution is critical. Arm also emphasizes scalability at the rack level, supporting high-density 1U server configurations with air cooling and significantly higher core counts in liquid-cooled deployments.
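To put the per-core figures in per-socket terms, the short sketch below multiplies them out. It uses only the numbers quoted above (136 cores, up to 6 GB/s per core, 300 W TDP); the function names are illustrative, and the results are upper-bound estimates rather than figures published by Arm. The watts-per-core value in particular is only a rough average, since the TDP also covers non-core logic such as memory controllers and I/O.

```python
# Back-of-the-envelope per-socket figures derived from the quoted specs.
# All constants come from the article; the derived values are estimates.

CORES_PER_CPU = 136        # "up to 136 cores per CPU"
BW_PER_CORE_GBPS = 6.0     # "up to 6 GB/s per core"
TDP_WATTS = 300.0          # "300 W thermal design power envelope"


def aggregate_bandwidth_gbps(cores=CORES_PER_CPU, per_core=BW_PER_CORE_GBPS):
    """Upper bound on memory bandwidth per socket if every core hits its peak."""
    return cores * per_core


def tdp_per_core_watts(tdp=TDP_WATTS, cores=CORES_PER_CPU):
    """Average power budget per core, ignoring uncore and I/O power."""
    return tdp / cores


if __name__ == "__main__":
    print(f"Aggregate bandwidth: {aggregate_bandwidth_gbps():.0f} GB/s per socket")
    print(f"Power budget: {tdp_per_core_watts():.2f} W per core (average)")
```

Under these assumptions, a fully populated socket would top out at 816 GB/s of memory bandwidth, with an average budget of roughly 2.2 W per core.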

The company highlights deployment flexibility as a key advantage. Air-cooled systems can scale to approximately 8,000 cores per rack, while liquid-cooled configurations can exceed 45,000 cores per rack. This level of density is aimed at improving overall workload consolidation and accelerator utilization, allowing data centers to extract more usable compute within existing power and space constraints. Arm further claims that the AGI CPU can deliver more than twice the performance per rack compared to traditional x86-based systems, potentially translating into substantial capital expenditure savings at hyperscale.

Arm has also outlined a broad ecosystem forming around the AGI CPU at launch. Meta is identified as a primary development partner, contributing to the co-design of the platform to align with real-world AI infrastructure requirements. Additional launch partners include Cerebras, Cloudflare, F5, OpenAI, Positron, Rebellions, SAP, and SK Telecom, indicating active interest across cloud, networking, and enterprise domains. On the hardware side, system vendors such as ASRock Rack, Lenovo, and Supermicro are preparing server platforms based on the new processor, suggesting that deployment readiness extends beyond the silicon itself.
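The rack-density figures quoted earlier (approximately 8,000 cores per air-cooled rack, more than 45,000 per liquid-cooled rack) can be translated into rough socket counts and CPU power budgets. The sketch below assumes every socket is the full 136-core part running at its 300 W TDP; that assumption, and the helper names, are illustrative and not stated by Arm. The power figure covers CPU TDP only and excludes memory, networking, accelerators, and cooling overhead.

```python
# Rough rack-level estimates from the quoted core counts.
# Assumption (not from Arm): every socket is a 136-core, 300 W part.

CORES_PER_CPU = 136
TDP_WATTS = 300

AIR_COOLED_CORES = 8_000       # "approximately 8,000 cores per rack"
LIQUID_COOLED_CORES = 45_000   # "can exceed 45,000 cores per rack"


def sockets_for(cores_per_rack, cores_per_cpu=CORES_PER_CPU):
    """Estimated number of CPUs needed to reach a given core count."""
    return cores_per_rack / cores_per_cpu


def cpu_power_kw(sockets, tdp_watts=TDP_WATTS):
    """CPU-only power at TDP, in kilowatts."""
    return sockets * tdp_watts / 1000


if __name__ == "__main__":
    for label, cores in [("air", AIR_COOLED_CORES), ("liquid", LIQUID_COOLED_CORES)]:
        n = sockets_for(cores)
        print(f"{label:>6}: ~{n:.0f} CPUs, ~{cpu_power_kw(n):.1f} kW of CPU TDP")
    ratio = LIQUID_COOLED_CORES / AIR_COOLED_CORES
    print(f"Liquid-cooled density is at least {ratio:.1f}x the air-cooled figure")
```

Because the source figures are themselves approximate ("approximately" and "exceed"), the outputs should be read as order-of-magnitude estimates rather than exact rack configurations.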