By Jaseem Akhtar

Introduction

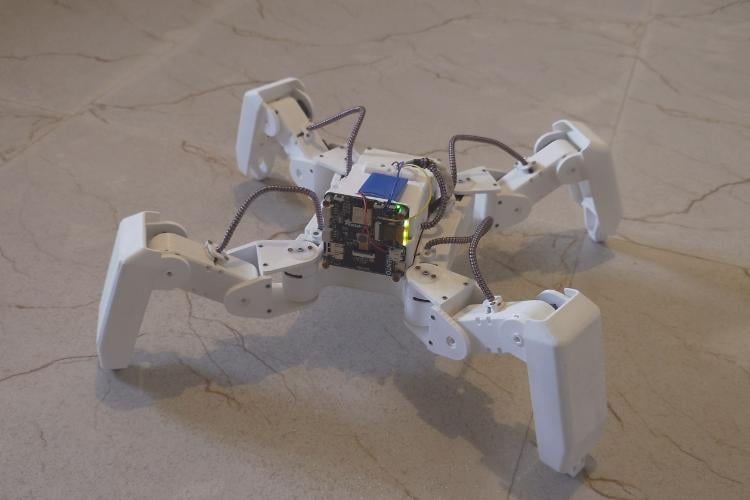

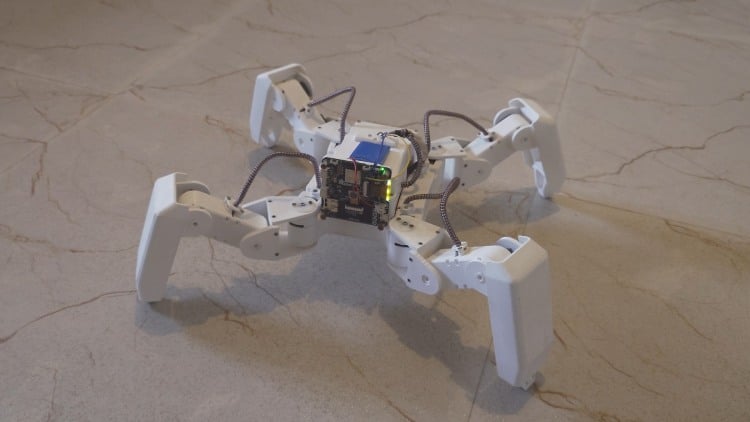

A smart, cute, friendly robot pet and desk companion. It is designed to help with everyday things: medication reminders, understanding behavior and emotion, easing loneliness and anxiety, and more. It is fully remote controllable and also senses its environment through a camera, with an AI model as the brain that makes decisions.

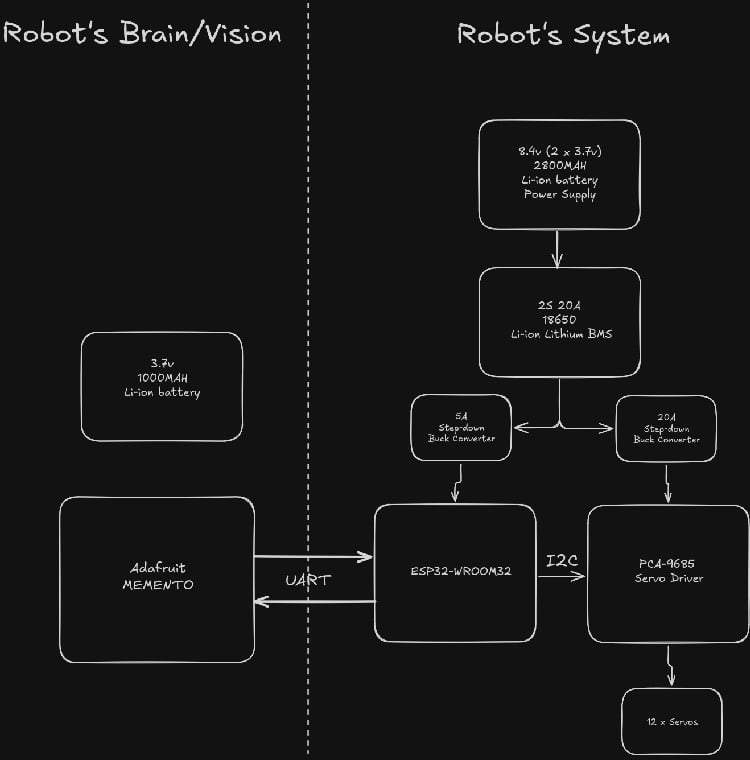

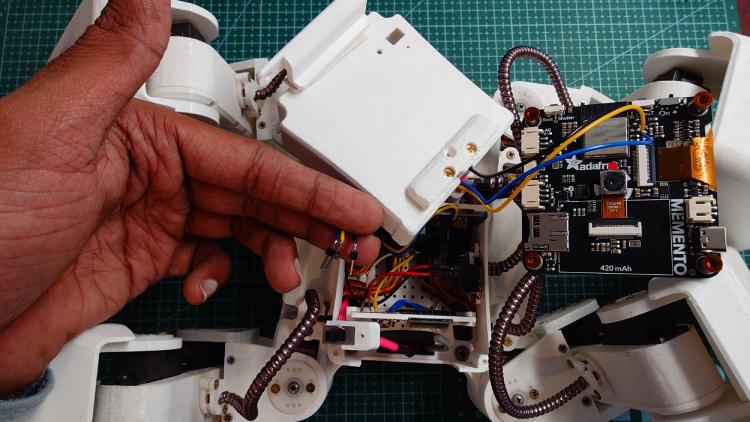

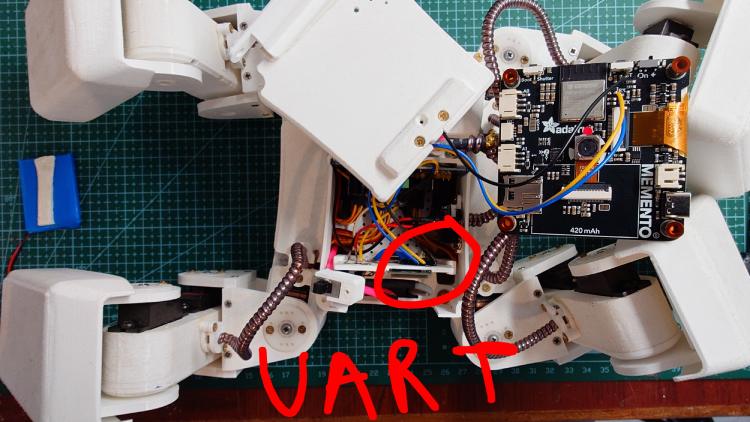

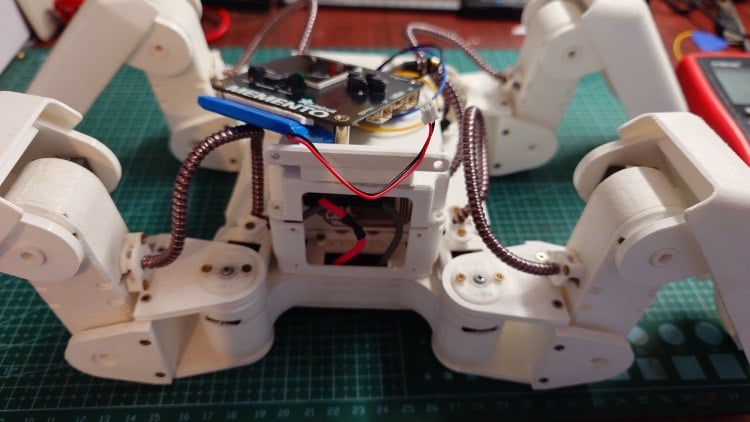

For the robot's actions and motion, I'm using an ESP32 board along with a servo controller module to control 12 servos, 3 per leg for the coxa, femur, and tibia. In its current state, the robot's kinematics model is modular and dynamic enough that we can specify x, y, and z coordinates and the leg moves to them.

For the robot's vision and brain, I'm using the Adafruit Memento.

Components Required

| Component Name | Quantity | Datasheet/Link |
| --- | --- | --- |
| 3.7 V 2800 mAh Li-ion battery | 2 | - |
| PCA9685 16-Channel 12-Bit Servo Driver | 1 | - |
| Adafruit Memento | 1 | View Datasheet |
| ESP32-WROOM32 | 1 | View Datasheet |

Hardware Assembly

Software architecture

All control logic runs on the software and firmware side of the robot. The system is designed using clear, modular layers, inspired by established software architecture principles.

Core components include:

- ServoDriver

- LegDriver

- Trajectory

- MotionController

- RobotController

Hardware abstraction and leg control

The ServoDriver handles hardware-specific responsibilities. It receives joint angles as input and uses the appropriate low-level drivers to control individual servos. The LegDriver is completely hardware-agnostic. It operates purely on x, y, z foot positions, converts them to joint angles using the inverse kinematics model, and forwards those angles to the corresponding ServoDriver instances for the coxa, femur, and tibia. This separation ensures that hardware changes do not affect higher-level motion logic.
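To make the LegDriver step concrete, here is a minimal sketch of how a foot position could be converted to coxa, femur, and tibia angles using the law of cosines. It is not the robot's actual code: `LegGeometry`, `JointAngles`, `solveLegIK`, the segment lengths, and the axis convention (x and y horizontal, z up, origin at the coxa joint) are all assumptions made for illustration.

```cpp
#include <algorithm>
#include <cmath>

// Hypothetical segment lengths in millimetres; the real values are not given here.
struct LegGeometry {
    double coxa;
    double femur;
    double tibia;
};

// Joint angles in radians.
struct JointAngles {
    double coxa;   // rotation about the vertical axis
    double femur;  // elevation of the femur above the horizontal plane
    double tibia;  // interior knee angle between femur and tibia
};

// Solves a 3-DOF leg for a foot target (x, y, z) in the coxa frame.
// Returns false (and leaves `out` untouched) when the target is out of reach.
bool solveLegIK(const LegGeometry& g, double x, double y, double z, JointAngles& out) {
    const double coxaAngle = std::atan2(y, x);
    // Horizontal distance from the femur joint to the foot.
    const double r = std::hypot(x, y) - g.coxa;
    // Straight-line distance from the femur joint to the foot.
    const double d = std::hypot(r, z);
    if (d < 1e-9 || d > g.femur + g.tibia || d < std::fabs(g.femur - g.tibia)) {
        return false;
    }
    // Law of cosines on the femur/tibia/d triangle; clamp against rounding error.
    const double cosKnee = (g.femur * g.femur + g.tibia * g.tibia - d * d) /
                           (2.0 * g.femur * g.tibia);
    const double cosHip = (g.femur * g.femur + d * d - g.tibia * g.tibia) /
                          (2.0 * g.femur * d);
    const double knee = std::acos(std::max(-1.0, std::min(1.0, cosKnee)));
    const double hip = std::acos(std::max(-1.0, std::min(1.0, cosHip)));
    out.coxa = coxaAngle;
    out.femur = std::atan2(z, r) + hip;  // knee-up solution
    out.tibia = knee;
    return true;
}
```

In a layout like this, the LegDriver would pass each resulting angle to the matching ServoDriver, which would then apply any servo-specific offsets and direction flips.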

Trajectories and motion execution

How a leg moves through space is defined by a trajectory. A trajectory describes the path a foot should follow.

Supported trajectory types include:

- linear

- Bézier

- circular

- other parametric paths

Individual trajectories or groups of trajectories are executed by the MotionController.

The MotionController is aware of speed, distance, and timing, and ensures smooth execution of motion. It can also apply easing functions to control acceleration and deceleration.

Remote control layer

A dedicated remote control layer runs on a separate core of the CPU.

This layer is responsible for:

- receiving data via Bluetooth

- parsing incoming commands

- forwarding structured commands to the main control core

It includes modules such as BluetoothController and CommandParser, keeping communication concerns isolated from motion and control logic.
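A CommandParser of this kind could look roughly like the sketch below. It assumes that incoming frames use the same `#<letter><number>;` framing as the `#T0.25;` turn command shown in the face-tracking snippet later in this write-up; the `Command` struct and `parseCommand` function are hypothetical names, not the project's real API.

```cpp
#include <cstdlib>
#include <cstring>

// One parsed command: a single-letter type and a numeric argument.
struct Command {
    char type;
    double value;
};

// Parses one frame such as "#T-0.25;". The caller is expected to strip any
// trailing "\r\n" first. Returns false on malformed input.
bool parseCommand(const char* frame, Command& out) {
    const std::size_t len = std::strlen(frame);
    // Shortest valid frame is '#', a letter, one digit, ';'.
    if (len < 4 || frame[0] != '#' || frame[len - 1] != ';') {
        return false;
    }
    const char type = frame[1];
    if (type < 'A' || type > 'Z') {
        return false;
    }
    char* end = nullptr;
    const double value = std::strtod(frame + 2, &end);
    // strtod must consume something, and must stop exactly at the ';'.
    if (end == frame + 2 || end != frame + len - 1) {
        return false;
    }
    out.type = type;
    out.value = value;
    return true;
}
```

Keeping the parser free of any Bluetooth or UART calls means the same function could be reused for every transport and exercised on a desktop machine.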

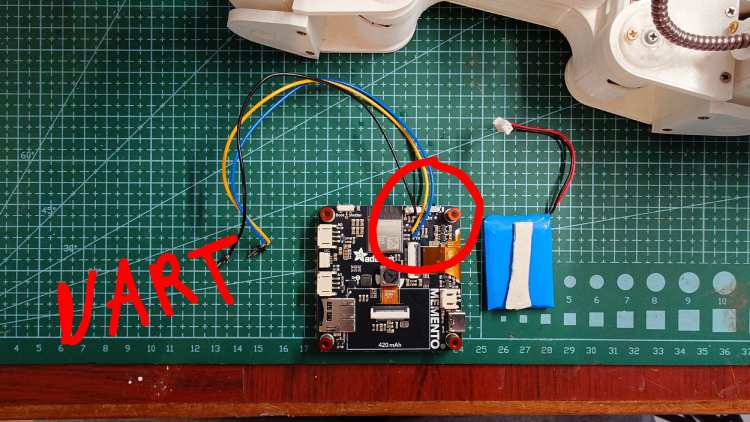

The Robot's Brain (Adafruit Memento):

The Memento runs Adafruit's face detection code to detect and recognize faces, then sends high-level commands to the robot over the UART port to trigger the required action.

The face tracking logic is simple:

    // x and w are the left edge and width of the detected face, in pixels.
    int face_center_x = x + (w / 2);
    int screen_center_x = 240 / 2;
    int error_x = face_center_x - screen_center_x;

    if (abs(error_x) > 20)
    {
        if (error_x < 0)
        {
            // Send the turn-left command to the robot.
            // 0.25 is the fraction of the full 45-degree turn to perform;
            // it is hardcoded here for simplicity and explanation.
            Serial1.println("#T-0.25;");
        }
        else
        {
            // Send the turn-right command to the robot.
            Serial1.println("#T0.25;");
        }
        delay(700);
    }
    else
    {
        // No command needed: the face is centered with the robot's face.
        Serial.println("CENTERED");
    }

The code computes the horizontal center of the detected face and measures its offset from the center of the 240-pixel-wide frame. If the offset exceeds the threshold (20 pixels), it sends the appropriate command: left if the face is left of center, right otherwise.

For the time being, only face detection is implemented. Work on the project will continue, adding the other features mentioned above.

My next priorities are:

- A properly fabricated PCB with a proper communication channel so that both MCUs can talk to each other.

- After that, the biggest updates are on the software side: emotion detection, playful behavior, medication reminders, etc.