By Pratyush Gehlot

Falls are a leading cause of injury among elderly and mobility-impaired individuals living alone. Traditional monitoring approaches rely on cameras or wearable devices — cameras raise significant privacy concerns in home environments, while wearables depend on the person remembering to wear them and keeping them charged.

This project presents a privacy-preserving fall detection and human presence monitoring system built on the ESP32-S3-BOX-3 platform with a ceiling-mounted LD6001 mmWave radar sensor. The radar emits millimeter-wave signals that reflect off the human body, producing a 3D point cloud with position, velocity, and signal quality data — without capturing any visual information.

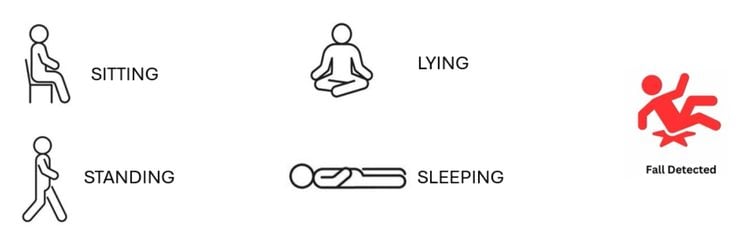

The system processes this point cloud in real-time through a multi-stage pipeline: noise filtering, DBSCAN spatial clustering, feature extraction (height, spatial spread, velocity variance), rule-based posture classification, and a multi-evidence finite state machine for fall detection. It classifies human posture into five states — standing, sitting, lying, sleeping, and fall — and raises an audible alarm when a fall is confirmed.

Key design goals of this project:

- Privacy — Radar-only sensing captures no images or audio of the monitored person

- No wearables required — Passive detection with no cooperation needed from the subject

- Real-time embedded processing — All detection runs on-device with no cloud dependency

- Multi-person support — Tracks up to 5 individuals simultaneously with independent fall detection per target

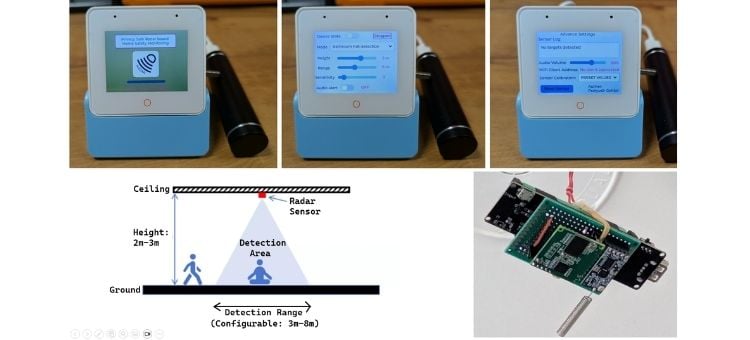

- Accessible interface — Touchscreen UI with posture display, configurable settings, and audio alerts

- Remote debugging — WiFi streaming of raw point cloud data to a PC 3D visualization tool for calibration and development

The system is designed for deployment in elderly care homes, assisted living facilities, hospital rooms, and private residences where continuous fall monitoring is needed without compromising the dignity and privacy of the occupant.

Components Required

| Component Name | Quantity | Datasheet/Link |
| --- | --- | --- |
| ESP32-S3-BOX-3 | 1 | View Datasheet |
| HC-12 wireless UART module | 2 | - |
| LD6001 60GHz Radar | 1 | - |
| 18650 Battery Shield | 1 | - |
| 18650 Li-Ion cell | 2 | - |

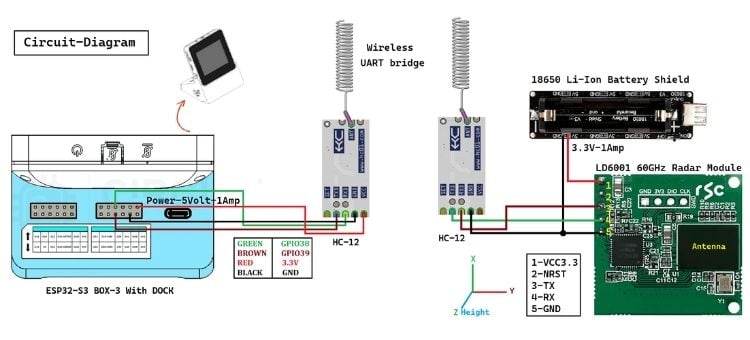

Circuit Diagram

Hardware Assembly

The hardware is split into two physically separate units connected wirelessly via HC-12 UART radio modules.

Part 1 — Ceiling Unit (Sensor Node)

Mounted on the ceiling, pointing downward. Battery-powered, no wires needed.

| Component | Role |
| --- | --- |
| LD6001 60GHz Radar | Detects humans, outputs point cloud over UART |
| HC-12 Wireless UART | Transmits radar serial data wirelessly to base unit |
| 18650 Li-Ion Battery Shield | Powers both radar and HC-12 at 3.3V / 1A |

Wiring: The battery shield's 3.3V and GND outputs feed both the HC-12 and the LD6001. Radar TX → HC-12 RXD, radar RX → HC-12 TXD.

Part 2 — Base Unit (Processing Node)

Sits on a desk/shelf. USB-C powered via power bank.

| Component | Role |
| --- | --- |
| ESP32-S3-BOX-3 + Dock | Runs detection pipeline, UI, audio alerts |
| HC-12 Wireless UART | Receives radar data wirelessly from ceiling unit |

Wiring: HC-12 TXD → GPIO38, HC-12 RXD → GPIO39, powered from the dock's 3.3V.

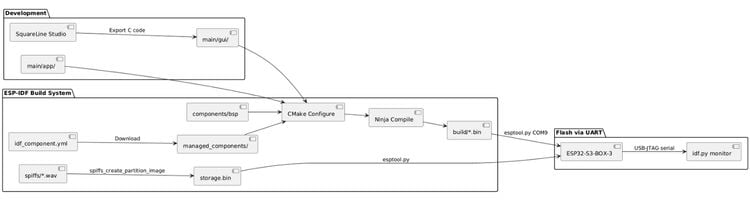

Software Flow

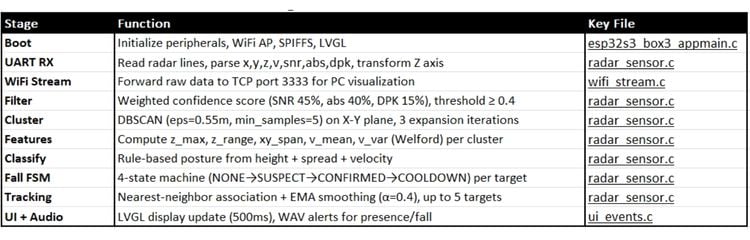

The software flow is split into 10 stages, summarized in the table below.

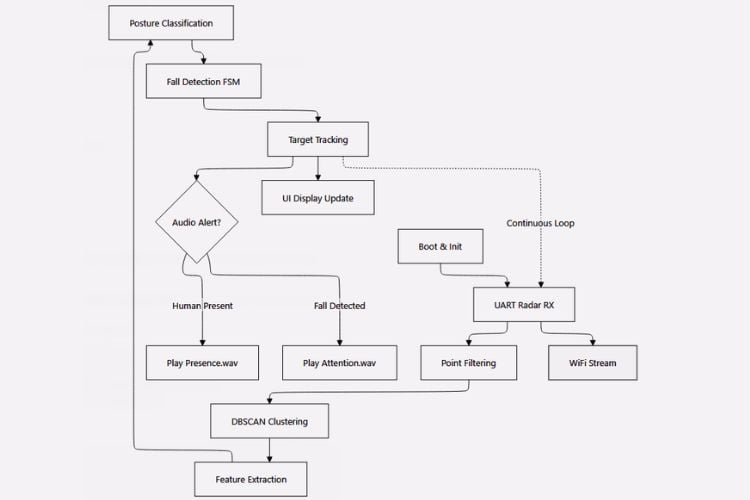

Below is the complete data flow of the ESP32-S3 radar human detection and fall monitoring system, from raw UART sensor data through the detection pipeline to UI display and audio alerts.

Boot And Initialization

- Mounts SPIFFS file system for audio files

- Initializes I2C bus for touch panel and audio codec

- Starts LCD display and LVGL graphics library

- Sets up WiFi AP mode (SSID: "ESP32S3BOX3_RadarSensor")

- Configures UART1 for radar sensor communication (115200 baud, GPIO 38/39)

- Creates FreeRTOS tasks for radar reception

- Displays boot animation with progress bar

- Plays bootaudio.wav during startup

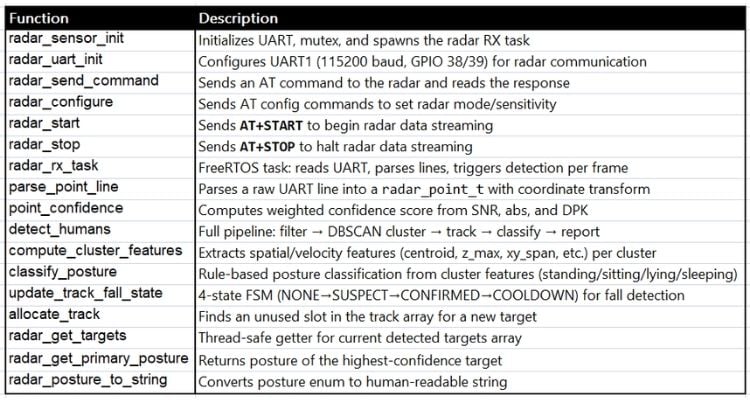

Key Functions

- app_main() - FreeRTOS application entry

- bsp_spiffs_mount() - File system setup

- bsp_display_start() - LVGL initialization

- wifi_stream_init() - TCP server on port 3333

- radar_sensor_init() - UART driver and RX task creation

Radar Data (LD6001) UART Reception

The radar module is configured and controlled over UART using AT commands. After AT+START, it streams point cloud frames over UART as plain-text lines, structured like this:

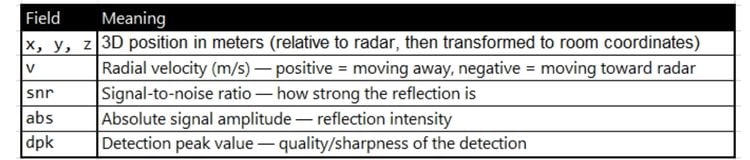

x=0.12 y=-0.35 z=1.80 v=-0.05 snr=22.3 abs=8.1 dpk=5.6

x=0.15 y=-0.30 z=1.75 v=-0.03 snr=19.8 abs=7.4 dpk=4.9

x=0.10 y=-0.40 z=0.45 v=0.01 snr=15.2 abs=6.0 dpk=3.2

-----PointNum=3

Each line is one reflection point detected by the 60GHz mmWave signal.

Per-point fields:

A person standing produces a vertical cluster of points (head, torso, legs). A person lying down produces a horizontal spread of low-z points. The snr, abs, and dpk values are combined into a confidence score to filter noise from real reflections.
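A minimal Python sketch of such a confidence score is shown here for illustration. The weights and full-scale values are hypothetical, since the firmware's actual constants are not given in this write-up. Only the 0.4 threshold comes from the detection pipeline description.

```python
# Hypothetical weights and normalisation ranges (not taken from the firmware).
WEIGHTS = {"snr": 0.5, "abs": 0.3, "dpk": 0.2}
FULL_SCALE = {"snr": 30.0, "abs": 10.0, "dpk": 8.0}
CONF_THRESHOLD = 0.4  # threshold quoted in the detection pipeline


def confidence(point):
    """Weighted sum of the three quality fields, each clamped to [0, 1]."""
    score = 0.0
    for name, weight in WEIGHTS.items():
        normalised = min(max(point[name] / FULL_SCALE[name], 0.0), 1.0)
        score += weight * normalised
    return score


def filter_points(points):
    """Keep only points whose confidence reaches the threshold."""
    return [p for p in points if confidence(p) >= CONF_THRESHOLD]
```

With these assumed constants, the first sample point above (snr=22.3, abs=8.1, dpk=5.6) scores roughly 0.75 and is kept, while a weak reflection with values near zero is dropped.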

A FreeRTOS task continuously reads raw text from the LD6001 radar sensor over UART and parses it into structured point cloud data.

- Runs in dedicated task radar_rx_task() with 8KB stack

- Reads UART bytes in 255-byte chunks with 100ms timeout

- Assembles line-buffered text (newline-delimited)

- Parses lines matching pattern: x=X,y=Y,z=Z,v=V,snr=S,abs=A,dpk=D

- Transforms coordinates for ceiling-mounted radar:

  - x_world = x_raw + GRID_X/2 (center X axis)

  - y_world = y_raw + GRID_Y/2 (center Y axis)

  - z_world = GRID_Z - z_raw (invert Z for height from floor)

- Accumulates points into the current frame

- Stores up to 128 points per frame in s_frame_points[]
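The parsing and coordinate steps listed above can be sketched in Python, for example inside a PC-side test tool. This is not the firmware code: the GRID_* room dimensions are hypothetical placeholders, dropping points beyond the 128-point limit is an assumption, and the regular expression accepts both space-separated and comma-separated fields because both forms appear in this write-up.

```python
import re

GRID_X, GRID_Y, GRID_Z = 4.0, 4.0, 2.6  # hypothetical room size in metres
MAX_POINTS = 128                        # per-frame limit from the list above
FIELDS = ("x", "y", "z", "v", "snr", "abs", "dpk")
KEY_VALUE = re.compile(r"(\w+)=(-?\d+(?:\.\d+)?)")

SAMPLE = """x=0.12 y=-0.35 z=1.80 v=-0.05 snr=22.3 abs=8.1 dpk=5.6
x=0.15 y=-0.30 z=1.75 v=-0.03 snr=19.8 abs=7.4 dpk=4.9
x=0.10 y=-0.40 z=0.45 v=0.01 snr=15.2 abs=6.0 dpk=3.2
-----PointNum=3"""


def parse_point(line):
    """Return one point in world coordinates, or None if the line is not a point."""
    found = dict(KEY_VALUE.findall(line))
    if not all(name in found for name in FIELDS):
        return None
    point = {name: float(found[name]) for name in FIELDS}
    point["x"] += GRID_X / 2           # center X axis
    point["y"] += GRID_Y / 2           # center Y axis
    point["z"] = GRID_Z - point["z"]   # height above the floor
    return point


def parse_frames(lines):
    """Group point lines into frames, closing a frame at each PointNum line."""
    frames, current = [], []
    for line in lines:
        line = line.strip()
        if line.startswith("-----PointNum="):
            frames.append(current)
            current = []
        elif len(current) < MAX_POINTS:  # assumption: extra points are dropped
            point = parse_point(line)
            if point is not None:
                current.append(point)
    return frames
```

Calling `parse_frames(SAMPLE.splitlines())` yields one frame with three points. The sketch ignores the count carried by the PointNum line; a stricter parser could compare it with the number of points received and discard mismatched frames.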

Detection Pipeline

UART RX → Point Filter → DBSCAN Cluster → Feature Extract → Posture Classify → Fall FSM → Track & Smooth → UI/Audio

- Point Filtering — Weighted confidence score (SNR, abs, DPK), threshold ≥ 0.4

- DBSCAN Clustering — Groups nearby points into human targets (eps=0.55m, min_samples=5)

- Feature Extraction — Per-cluster z_max, z_range, xy_span, velocity mean/variance

- Posture Classification — Rule-based using height, vertical extent, and spatial spread

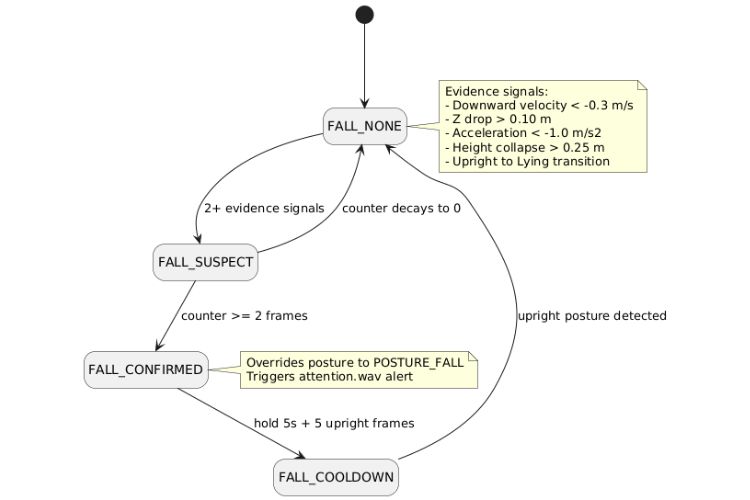

- Fall Detection — 4-state FSM (NONE → SUSPECT → CONFIRMED → COOLDOWN) with multi-evidence scoring

- Target Tracking — Nearest-neighbor association + EMA smoothing (α=0.4) across frames
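The clustering and tracking stages can be illustrated with a small pure-Python sketch. It uses the eps, min_samples, and α values quoted above. The 3D Euclidean distance, the MAX_JUMP gating distance, and the greedy matching order are assumptions made for illustration, and the firmware may differ on all three.

```python
import math

EPS = 0.55        # neighbourhood radius in metres (from the list above)
MIN_SAMPLES = 5   # minimum neighbours, counting the point itself
ALPHA = 0.4       # EMA smoothing factor (from the list above)
MAX_JUMP = 1.0    # hypothetical gate: max metres a target may move per frame


def dbscan(pts, eps=EPS, min_samples=MIN_SAMPLES):
    """Label each (x, y, z) tuple with a cluster id, or -1 for noise."""
    def neighbours(i):
        return [j for j, q in enumerate(pts) if math.dist(pts[i], q) <= eps]

    labels = [None] * len(pts)      # None means not visited yet
    cluster = 0
    for i in range(len(pts)):
        if labels[i] is not None:
            continue
        queue = neighbours(i)
        if len(queue) < min_samples:
            labels[i] = -1          # provisional noise
            continue
        labels[i] = cluster
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster  # noise near a core point becomes a border point
            if labels[j] is not None:
                continue
            labels[j] = cluster
            more = neighbours(j)
            if len(more) >= min_samples:
                queue.extend(more)   # j is a core point, keep expanding
        cluster += 1
    return labels


def centroids(pts, labels):
    """Mean position of each cluster, ignoring noise points."""
    groups = {}
    for p, lab in zip(pts, labels):
        if lab != -1:
            groups.setdefault(lab, []).append(p)
    return [tuple(sum(axis) / len(g) for axis in zip(*g)) for g in groups.values()]


def update_tracks(tracks, detections):
    """Greedy nearest-neighbour association, then EMA smoothing per track."""
    free = list(detections)
    for tid, pos in list(tracks.items()):
        if not free:
            break
        best = min(free, key=lambda c: math.dist(pos, c))
        if math.dist(pos, best) <= MAX_JUMP:
            free.remove(best)
            tracks[tid] = tuple(ALPHA * n + (1 - ALPHA) * o for n, o in zip(best, pos))
    for c in free:                   # unmatched detections start new tracks
        tracks[max(tracks, default=-1) + 1] = c
    return tracks
```

A typical per-frame call would be `tracks = update_tracks(tracks, centroids(pts, dbscan(pts)))`. The sketch never retires stale tracks and does not enforce the five-target limit, both of which a real tracker would need.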

Posture Classification

Posture is assigned by a rule-based classifier that applies thresholds on height and spatial spread.
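As a rough illustration, the per-cluster features and a threshold-based classifier might look like the Python sketch below. Every threshold is a hypothetical value chosen for the example, xy_span is assumed to be the larger of the X and Y extents, and only three of the five postures are covered because sleeping and fall presumably depend on how a target behaves over time rather than on a single frame.

```python
from statistics import fmean, pvariance

# Hypothetical thresholds in metres (the firmware's values are not given here).
STAND_MIN_HEIGHT = 1.4
SIT_MIN_HEIGHT = 0.8
LYING_MIN_SPAN = 0.9


def extract_features(cluster):
    """Compute z_max, z_range, xy_span and velocity statistics for one cluster."""
    xs = [p["x"] for p in cluster]
    ys = [p["y"] for p in cluster]
    zs = [p["z"] for p in cluster]
    vs = [p["v"] for p in cluster]
    return {
        "z_max": max(zs),
        "z_range": max(zs) - min(zs),
        "xy_span": max(max(xs) - min(xs), max(ys) - min(ys)),
        "v_mean": fmean(vs),
        "v_var": pvariance(vs),
    }


def classify_posture(features):
    """Map single-frame features to a coarse posture label."""
    tall = features["z_max"] >= STAND_MIN_HEIGHT
    upright = features["z_range"] > features["xy_span"]
    if tall and upright:
        return "standing"
    if features["z_max"] >= SIT_MIN_HEIGHT:
        return "sitting"
    if features["xy_span"] >= LYING_MIN_SPAN:
        return "lying"
    return "unknown"
```

The rules are checked from tallest to lowest, so a cluster is only considered lying once it has failed both height tests.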

Fall Detection State Machine
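The four states named in the detection pipeline (NONE, SUSPECT, CONFIRMED, COOLDOWN) can be sketched as a small Python class. The evidence terms, score thresholds, and frame counts below are all hypothetical and only show how multi-evidence scoring could drive the transitions.

```python
from enum import Enum


class FallState(Enum):
    NONE = 0
    SUSPECT = 1
    CONFIRMED = 2
    COOLDOWN = 3


# Hypothetical tuning values, not taken from the firmware.
SUSPECT_SCORE = 0.5    # score needed to enter or stay in SUSPECT
CONFIRM_SCORE = 0.7    # score that counts towards confirmation
CONFIRM_FRAMES = 5     # strong-evidence frames needed to confirm
COOLDOWN_FRAMES = 50   # frames to wait before re-arming


def evidence_score(height_drop_m, down_speed_mps, is_lying):
    """Combine three assumed pieces of evidence into a score in [0, 1]."""
    score = 0.0
    if height_drop_m >= 0.6:
        score += 0.4
    if down_speed_mps >= 0.8:
        score += 0.3
    if is_lying:
        score += 0.3
    return score


class FallDetector:
    def __init__(self):
        self.state = FallState.NONE
        self.counter = 0

    def update(self, score):
        """Advance one frame; return True only on the frame a fall is confirmed."""
        if self.state is FallState.NONE:
            if score >= SUSPECT_SCORE:
                self.state, self.counter = FallState.SUSPECT, 0
        elif self.state is FallState.SUSPECT:
            if score < SUSPECT_SCORE:
                self.state = FallState.NONE          # evidence faded, false alarm
            elif score >= CONFIRM_SCORE:
                self.counter += 1
                if self.counter >= CONFIRM_FRAMES:
                    self.state, self.counter = FallState.CONFIRMED, 0
                    return True                      # trigger the alarm once
        elif self.state is FallState.CONFIRMED:
            self.state, self.counter = FallState.COOLDOWN, 0
        elif self.state is FallState.COOLDOWN:
            self.counter += 1
            if self.counter >= COOLDOWN_FRAMES:
                self.state = FallState.NONE
        return False
```

One detector instance per tracked target would give independent fall detection for each person. The cooldown keeps a single fall from raising the alarm repeatedly.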

Key Radar Functions

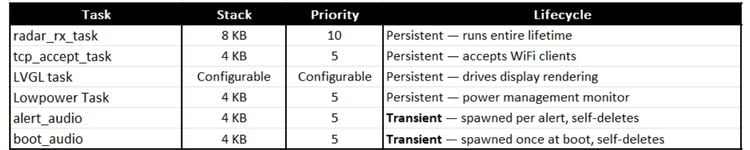

FreeRTOS Tasks

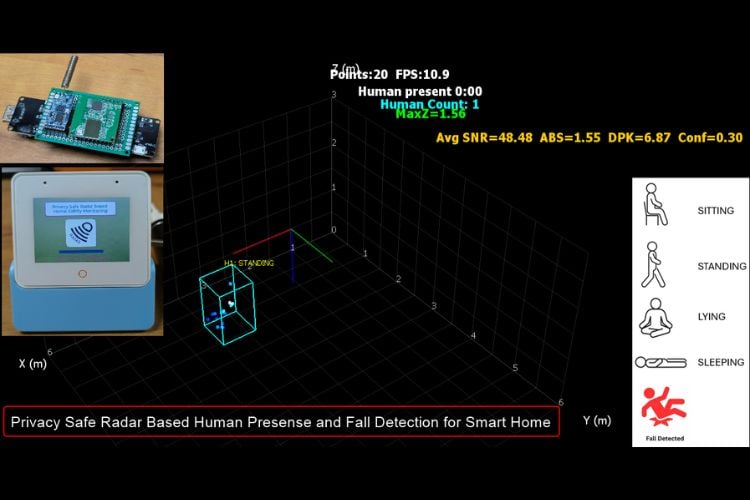

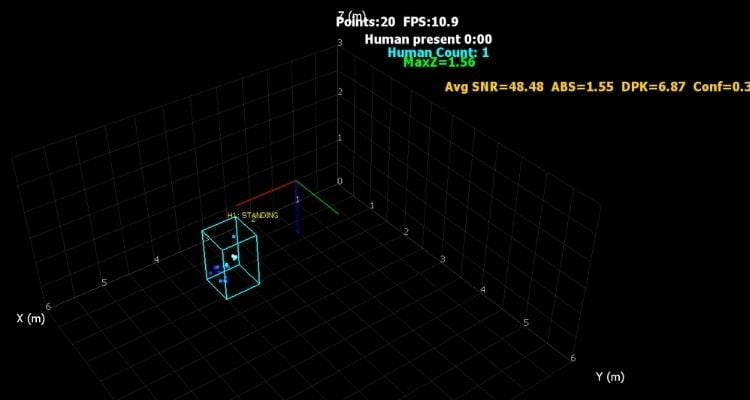

PC Client 3D Visualization: Streamed over WiFi AP [Debug/Training/Calibration Purpose]

A Python companion app connects to the ESP32's WiFi AP to visualize the radar point cloud in 3D.

The app connects to 192.168.4.1:3333 and renders the point cloud with cluster labels and posture overlay.
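A minimal sketch of the connection side of such a client is shown below, using only the Python standard library. The wire format is an assumption: the sketch treats the stream as newline-delimited ASCII text similar to the radar's own output, which may not match what the firmware actually sends. The address and port are the ones stated above.

```python
import socket

HOST, PORT = "192.168.4.1", 3333  # ESP32 soft-AP address and TCP port


def iter_lines(sock, bufsize=4096):
    """Yield complete text lines from a socket until the peer closes it."""
    pending = b""
    while True:
        chunk = sock.recv(bufsize)
        if not chunk:
            break
        pending += chunk
        while b"\n" in pending:
            line, pending = pending.split(b"\n", 1)
            yield line.decode("ascii", errors="replace").rstrip("\r")
    if pending:  # flush a final line that had no trailing newline
        yield pending.decode("ascii", errors="replace").rstrip("\r")


def main():
    """Print every line received; a real client would parse and plot instead."""
    with socket.create_connection((HOST, PORT), timeout=5) as sock:
        for line in iter_lines(sock):
            print(line)
```

Calling `main()` while the PC is joined to the ESP32's access point prints the incoming stream. Buffering until a newline arrives matters because TCP may split or merge lines across `recv` calls.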

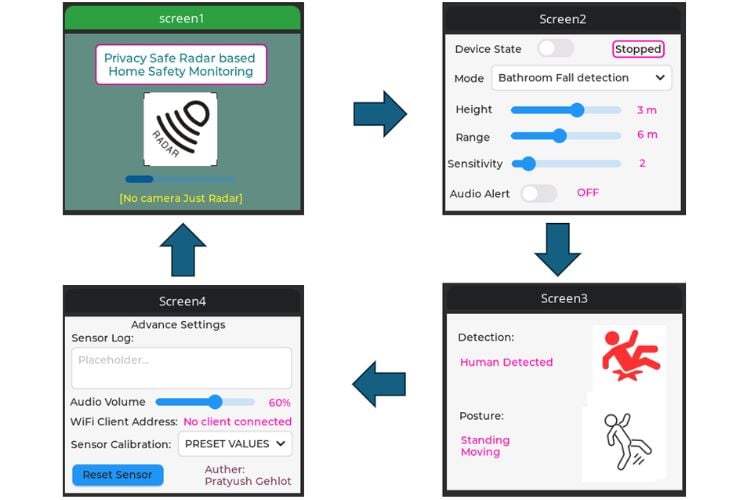

LVGL based UI Screens:

Build, Flash & Monitor Flow: