Edge Impulse Studio is a machine learning platform that lets developers train machine learning models in the cloud and deploy them on microcontrollers (e.g. Arduino and STM32) or single-board computers like the Raspberry Pi. Initially, Edge Impulse didn’t support the Raspberry Pi, but in April 2021 it announced full support for the board. With this feature, you can now collect data, train a model on it in Edge Impulse, and then deploy the newly trained model back to your Raspberry Pi with zero lines of code.

So, in this tutorial, we are going to train an image classifier model on Edge Impulse and then deploy it on a Raspberry Pi. Previously, we used Edge Impulse with the Arduino Nano 33 BLE Sense to build a Cough Detection system. We have also trained custom models using TensorFlow for Face Mask Detection and Object Detection.

Components Required

Hardware

- Raspberry Pi

- Pi Camera Module

Software

- Edge Impulse Studio

Getting Started with Edge Impulse

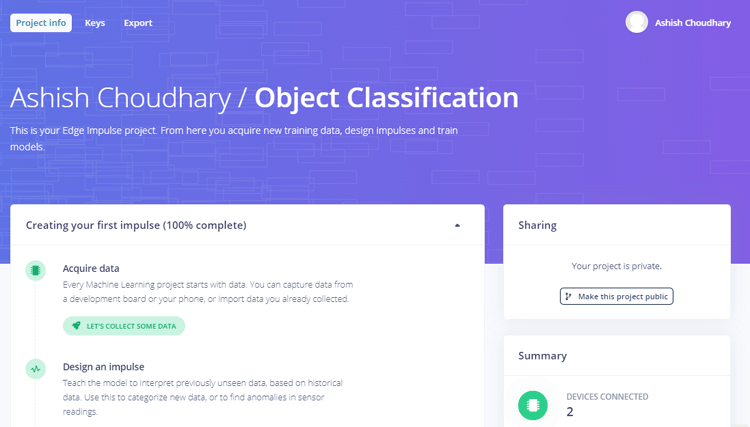

To train a machine learning model with Edge Impulse for the Raspberry Pi, create an Edge Impulse account, verify it, and then start a new project.

Installing Edge Impulse on Raspberry Pi

Now, to use Edge Impulse on the Raspberry Pi, you first have to install Edge Impulse and its dependencies. Run the commands below, one at a time, on the Raspberry Pi:

curl -sL https://deb.nodesource.com/setup_12.x | sudo bash -

sudo apt install -y gcc g++ make build-essential nodejs sox gstreamer1.0-tools gstreamer1.0-plugins-good gstreamer1.0-plugins-base gstreamer1.0-plugins-base-apps

sudo npm install edge-impulse-linux -g --unsafe-perm
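Once the installation finishes, it can help to confirm that the tools actually ended up on the PATH. The following is a minimal Python sketch and not part of the Edge Impulse tooling: the helper name `missing_tools` is hypothetical, and the tool list assumes that the nodejs package provides both `node` and `npm`.

```python
import shutil

# Command names this tutorial relies on. `node` and `npm` are assumed to come
# from the nodejs package; the other two are the commands used in this tutorial.
REQUIRED_TOOLS = ["node", "npm", "edge-impulse-linux", "edge-impulse-linux-runner"]


def missing_tools(names):
    """Return the names that cannot be found on the current PATH."""
    return [name for name in names if shutil.which(name) is None]


if __name__ == "__main__":
    missing = missing_tools(REQUIRED_TOOLS)
    if missing:
        print("Not found on PATH:", ", ".join(missing))
    else:
        print("All required tools were found.")
```

If anything is reported as missing, re-running the matching install command is usually the first thing to try.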

Now, use the below command to run Edge Impulse:

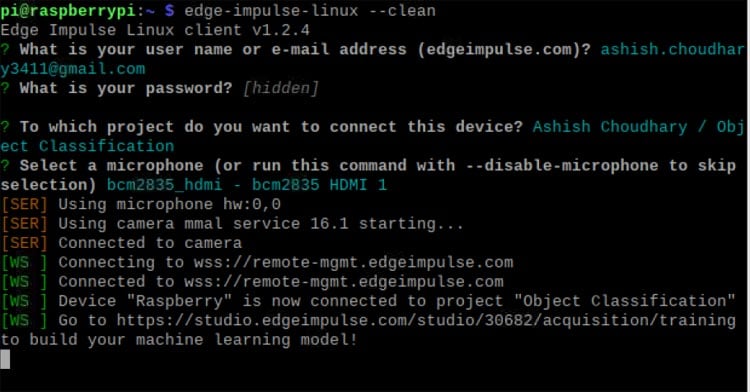

edge-impulse-linux

You will be asked to log in to your Edge Impulse account. You’ll then be asked to choose a project, and finally to select a microphone and camera to connect to the project.

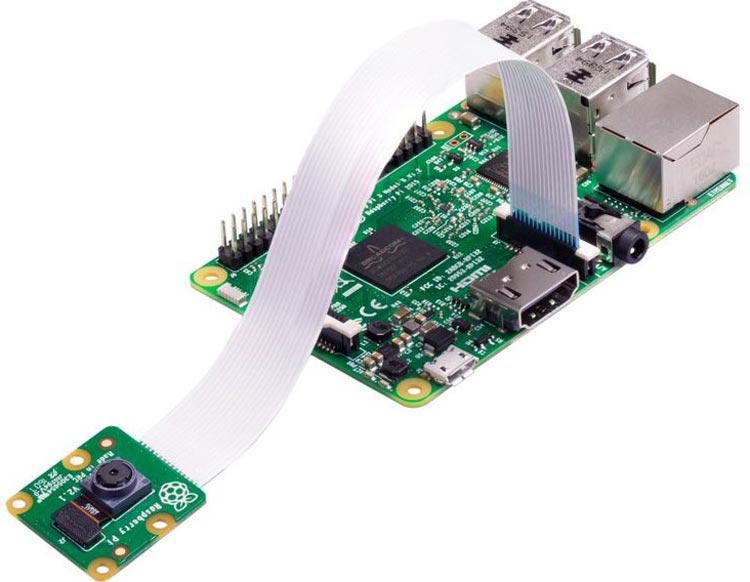

Now that Edge Impulse is running on the Raspberry Pi, we have to connect the Pi Camera Module to the Pi for image collection. Connect the Pi camera as shown in the image given below.

Creating the Dataset

As mentioned earlier, we are using Edge Impulse Studio to train our image classification model. For that, we have to collect a dataset containing samples of the objects that we would like to classify with the Pi camera. Since the goal is to classify Onion and Potato, you'll need to collect some sample images of each so that the model can learn to distinguish between the two.

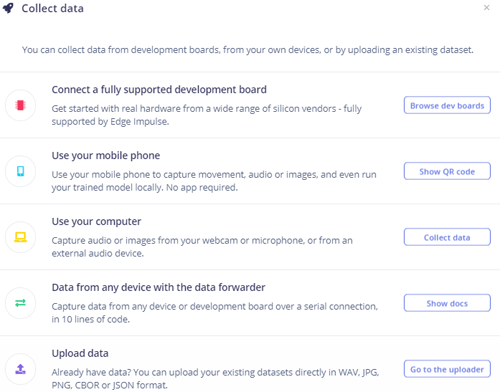

You can collect the samples with a mobile phone or the Raspberry Pi board, or you can import an existing dataset into your Edge Impulse account. The easiest way to load the samples into Edge Impulse is with your mobile phone. For that, you have to connect your phone to Edge Impulse.

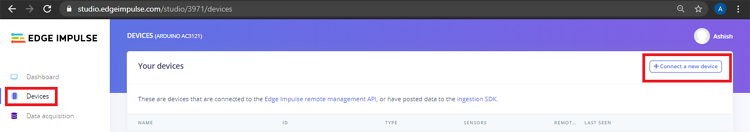

To connect your mobile phone, click on ‘Devices’ and then click on ‘Connect a New Device’.

Now, in the next window, click on ‘Use your Mobile Phone’, and a QR code will appear. Scan the QR code with your phone using Google Lens or another QR code scanner app. This will connect your phone to Edge Impulse Studio.

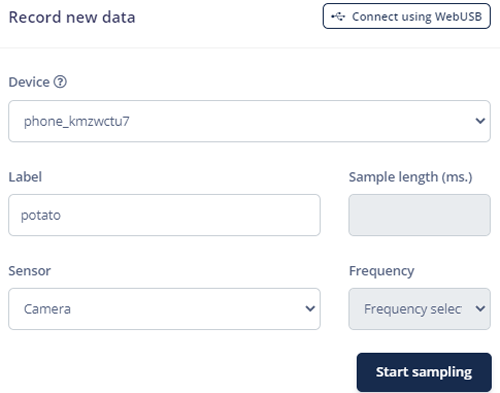

With your phone connected with Edge Impulse Studio, you can now load your samples. To load the samples click on ‘Data acquisition’. Now, on the Data acquisition page enter the label name and select ‘Camera’ as the sensor. Click on ‘Start sampling’.

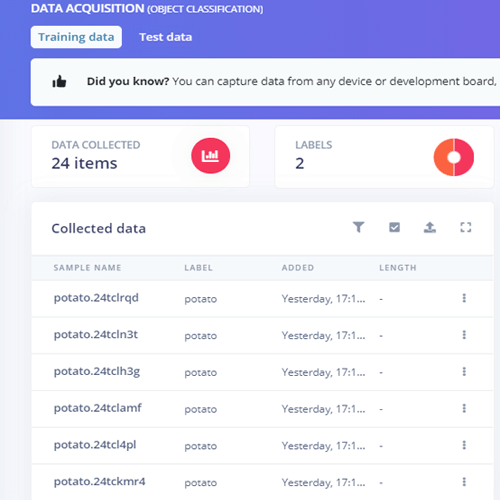

With the label set to ‘potato’, this will take an image of the potato and save it to the Edge Impulse cloud. Take 10 to 20 images from different angles. After uploading the potato samples, set the label to ‘onion’ and collect another 10 to 20 onion images.

These samples are for training the model. In the next step, we will collect the test data. Test data should be at least 20% of the training data, so collect about 3 test samples each of potato and onion.
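The 20% rule above is simple arithmetic, and it can be sketched in Python as follows. The function name `min_test_samples` is hypothetical and only illustrates the calculation; Edge Impulse Studio does not require you to run any code for this step.

```python
def min_test_samples(train_count, percent=20):
    """Smallest whole number of test samples that is at least `percent`% of train_count."""
    # Integer ceiling division avoids floating-point rounding surprises.
    return -(-train_count * percent // 100)


if __name__ == "__main__":
    # 10 to 20 training images per class, as collected in this tutorial.
    for train_count in (10, 15, 20):
        print(train_count, "training images ->", min_test_samples(train_count), "test images")
```

For 10 to 20 training images per class, this gives 2 to 4 test images per class, which is why about 3 is a reasonable number here.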

Training the Model

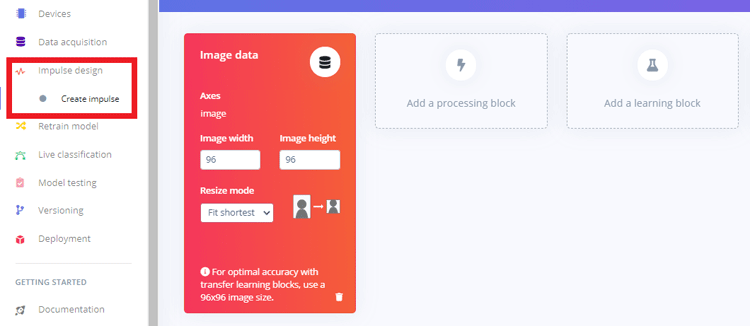

Now that our dataset is ready, we will create an impulse for our data. For that, go to the ‘Create impulse’ page.

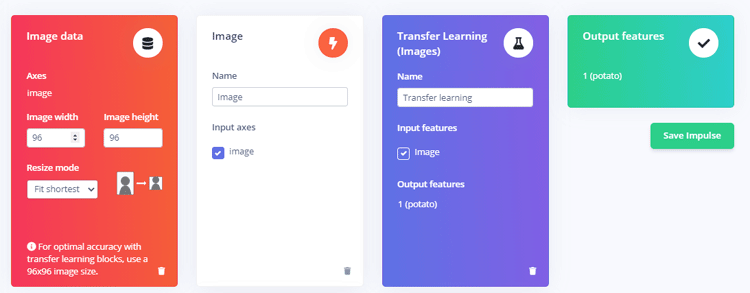

Now, on the ‘Create impulse’ page, click on ‘Add a processing block’ and then click on the “Add” button next to the “Image” block. This adds a processing block that will normalize the image data and reduce color depth. After that, add the “Transfer Learning (images)” learning block to grab a pre-trained model intended for image classification, on which we will perform transfer learning to tune it for our potato and onion recognition task. Then click on ‘Save Impulse’.
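To give a feel for what "normalize the image data and reduce color depth" can mean, here is a minimal Python sketch. It is an illustration under stated assumptions, not Edge Impulse's actual implementation: it assumes color depth is reduced by converting RGB to grayscale and that normalization means scaling pixel values to the range 0 to 1. The function names are hypothetical.

```python
def to_grayscale(pixel):
    """Collapse an (R, G, B) pixel with 0-255 channels into one luminance value."""
    r, g, b = pixel
    # ITU-R BT.601 luma weights, a common choice for RGB-to-gray conversion.
    return 0.299 * r + 0.587 * g + 0.114 * b


def preprocess(image):
    """Convert rows of RGB pixels into rows of grayscale values scaled to [0, 1]."""
    return [[to_grayscale(pixel) / 255.0 for pixel in row] for row in image]


if __name__ == "__main__":
    # A tiny 2x2 "image": white, black, pure red, pure blue.
    tiny_image = [[(255, 255, 255), (0, 0, 0)], [(255, 0, 0), (0, 0, 255)]]
    for row in preprocess(tiny_image):
        print([round(value, 3) for value in row])
```

The point of such a step is that every image reaches the model in the same numeric range and with fewer values per pixel.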

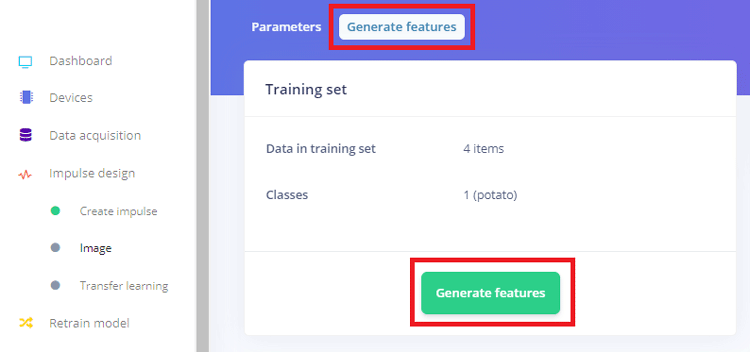

Next, go to the “Images” sub-item under the “Impulse design” menu item, click on the ‘Generate Features’ tab, and then hit the green “Generate features” button.

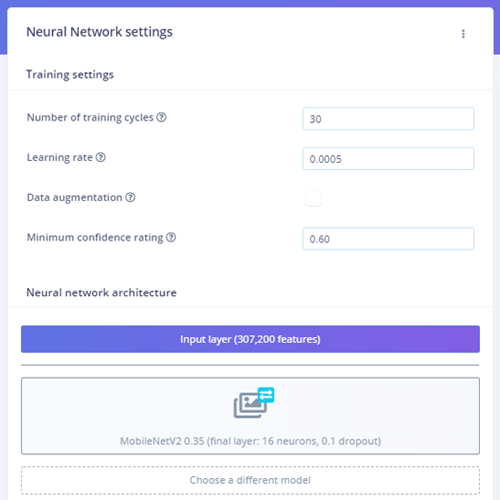

After that, click on the “Transfer learning” sub-item under the “Impulse design” menu item, and hit the “Start training” button at the bottom of the page. Here, we used the default MobileNetV2. You can use different training models if you want.

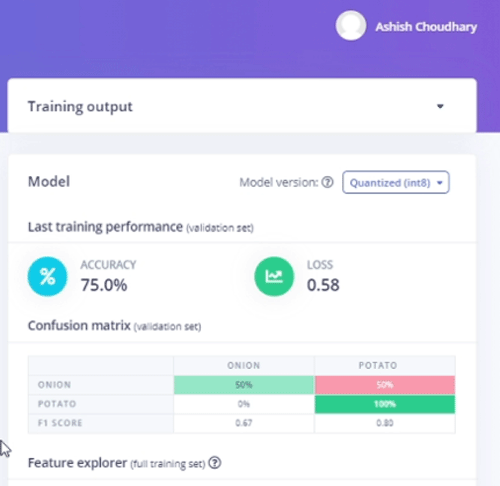

Training the model will take some time. After training the model, it will show the training performance. For me, the accuracy was 75% and the loss was 0.58.
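For readers wondering what these two numbers measure, the sketch below computes accuracy and a loss value for a handful of made-up predictions. It assumes the loss is categorical cross-entropy, which is common for classifiers but is not confirmed by the training page; the sample predictions and function names are hypothetical.

```python
import math


def accuracy(predictions, labels):
    """Fraction of samples whose highest-scoring label matches the true label."""
    correct = sum(
        1 for probs, label in zip(predictions, labels)
        if max(probs, key=probs.get) == label
    )
    return correct / len(labels)


def mean_cross_entropy(predictions, labels):
    """Average of -log(probability assigned to the true label).

    Assumes every true label received a probability greater than zero.
    """
    total = sum(-math.log(probs[label]) for probs, label in zip(predictions, labels))
    return total / len(labels)


if __name__ == "__main__":
    # Made-up model outputs for four test images.
    predictions = [
        {"onion": 0.9, "potato": 0.1},
        {"onion": 0.6, "potato": 0.4},
        {"onion": 0.2, "potato": 0.8},
        {"onion": 0.3, "potato": 0.7},
    ]
    labels = ["onion", "potato", "potato", "potato"]
    print("accuracy:", accuracy(predictions, labels))
    print("loss:", round(mean_cross_entropy(predictions, labels), 4))
```

In this made-up example, three of four images are classified correctly, so the accuracy is 75%. The loss also reflects how confident the model was, so it drops as the probability assigned to the correct label rises.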

We can now test our trained model. For that, click on the “Live classification” tab in the left-hand menu, and then use the Raspberry Pi camera to take sample images.

Deploying the Trained Model to Raspberry Pi

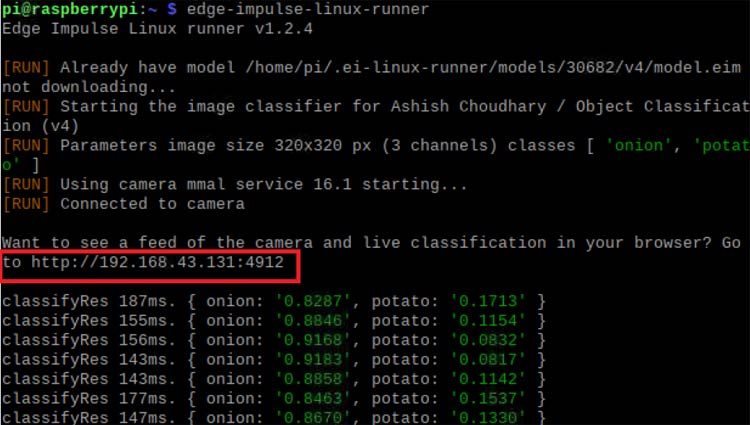

Once the training process is complete, we can deploy the trained Edge Impulse image classification model to the Raspberry Pi. For that, go to the Terminal window and enter the below command:

edge-impulse-linux-runner

If the edge-impulse-linux command is already running, then hit Control-C to stop it and then enter the above command.

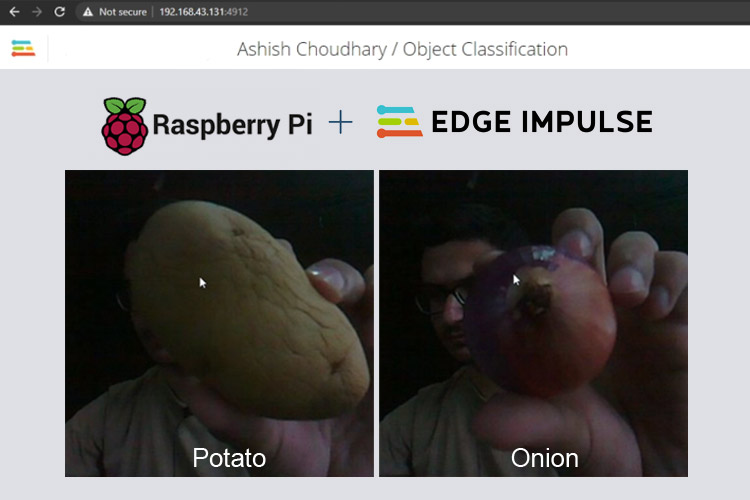

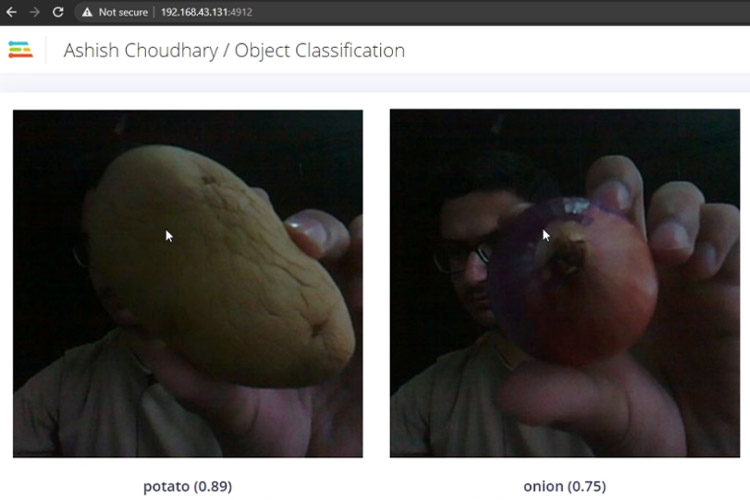

This will connect the Raspberry Pi to the Edge Impulse cloud, download the recently trained model, and start a video stream from your camera that looks for potatoes and onions. The results will be shown in the Terminal window.
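Each result is essentially a score per label. If you want to act on those scores in your own program, a simple approach is to pick the highest-scoring label and ignore low-confidence results. The Python sketch below illustrates that idea only: the dictionary shape, the `top_label` name, and the 0.6 threshold are assumptions for illustration, not the runner's actual output format.

```python
def top_label(scores, threshold=0.6):
    """Return the best-scoring label, or "uncertain" if its score is below threshold."""
    label = max(scores, key=scores.get)
    return label if scores[label] >= threshold else "uncertain"


if __name__ == "__main__":
    # Hypothetical scores for two camera frames.
    print(top_label({"onion": 0.91, "potato": 0.09}))
    print(top_label({"onion": 0.55, "potato": 0.45}))
```

A threshold like this avoids reporting a class when the model is close to guessing between the two.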

You can also open up the video stream on the browser. For that, copy the URL (shown in the above image) from the terminal window and paste that into a browser.

This is how you can use Edge Impulse Studio to train a custom machine learning model. Edge Impulse is a great platform for non-coders to develop machine learning models. If you have queries related to Edge Impulse or this project, you can post them in the comment section. A complete working video is given below.
